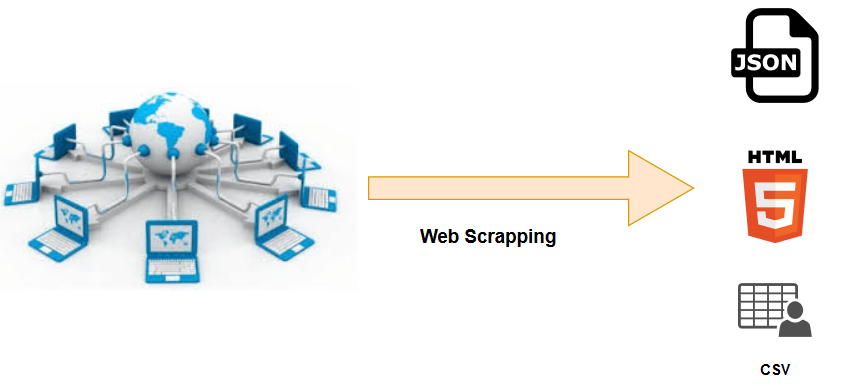

With this in mind, I understood that web scraping was the right way. Web scraping is a technique used to extract data from websites. An example could be a program that notifies you when a new ThinkPad appears on eBay or when the price of a product in your Amazon wishlist decreases. Actually, I have tried to develop an Amazon price tracking service but, unfortunately, Amazon has a vested interest in protecting its data and has some basic anti-scraping measures in place. It's possible to avoid bot detection, but it needs a lot of effort and hundreds of proxy servers, and honestly I do not think the benefit is worth the cost.

I used Python because it is easy to use and read and, not only in my opinion, the best tool to develop a web scraping program. In order to start scraping the web we have to create a Python project and import the following libraries: requests for HTTP requests, pprint to prettify our debug logs, and BeautifulSoup, which we will use to parse HTML pages.

If you are slightly smart you should have already realized how this URL is composed. The red portion is responsible for the magic trick. Whatever we write after the static portion will be interpreted by the website as a search, like the one described before. After some attempts, I found that we can just add the word after the static portion and avoid the ?q=blabla thing. Having said that, let's go back to our Python project.
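To make the URL trick concrete, here is a minimal sketch of how the lookup address could be assembled. The base URL is a placeholder of my own, since the dictionary site is not named here, and `build_lookup_url` is a hypothetical helper rather than part of any library.

```python
from urllib.parse import quote

# Placeholder for the static portion of the URL (assumption: the real site is not named here).
BASE_URL = "https://dictionary.example.com/definition/"


def build_lookup_url(word, base_url=BASE_URL):
    """Append the word to the static portion instead of using a ?q= query string."""
    # quote() percent-encodes spaces and other unsafe characters;
    # safe="" also encodes "/" so the word cannot add extra path segments.
    return base_url + quote(word.strip(), safe="")


if __name__ == "__main__":
    print(build_lookup_url("serendipity"))
```

The resulting string is what would then be passed to `requests.get`, with the response body handed to BeautifulSoup for parsing.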

I started by looking for a dictionary REST API, with the purpose of reusing a simple Node.js project that I developed a year ago, but I was not able to find any free dictionary API service.

Automating annoying stuff is essentially what developers do for themselves and others every day. An example of annoying stuff for me was checking the meaning of words on the web while I was reading a slightly difficult book. It was really frustrating to browse the web every time. Then I had the idea: create a Telegram bot that, when sent a word, sends back a message with its pronunciation and meaning.

Importing libraries: `import requests`, `import json`, `from bs4 import BeautifulSoup`. The Python libraries perform the following tasks.

- requests - will be used to make HTTP requests to the webpage.
- json - we'll use this to store the extracted information to a JSON file.

The extracted elements are then stored in respective variables, which we'll put in a dictionary. With this information, we can then comfortably append the dictionary object (`append(single)`) to the result list initialized before our for loop. Finally, store the Python list in a JSON file by the name "books.json" with an indent of 4 for readability purposes. The code for this step opens the file with `with open('books.json', 'w') as f:` and writes the list through the `json` module.

With that, you have your simple web scraper up and running. For more on web scrapers, read the documentation for the libraries or look on YouTube. If you liked this walkthrough, subscribe to my mailing list to get notified whenever I make new posts.
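The storage step described above can be sketched roughly as follows. The helper name `save_books` and the sample values are my own placeholders; only the file name "books.json", the `single` dictionary and the indent of 4 come from the walkthrough.

```python
import json


def save_books(books, path="books.json"):
    """Write the list of book dictionaries to a JSON file, indented by 4 spaces."""
    with open(path, "w", encoding="utf-8") as f:
        json.dump(books, f, indent=4)


if __name__ == "__main__":
    result = []  # initialized before the loop over the scraped books
    # Made-up values standing in for one scraped book.
    single = {
        "title": "Example Book",
        "rating": "Three",
        "link": "catalogue/example/index.html",
        "cover": "media/example.jpg",
    }
    result.append(single)
    save_books(result)
```

The `indent=4` argument only affects how the file looks; the data loaded back with `json.load` is the same with or without it.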

Scraper API can be used with both BeautifulSoup and Selenium. With the code Lewis10, you'll get a 10% discount off your first purchase!

In this tutorial, we'll be extracting data from Books to Scrape, a site which you can use to practise your web scraping. We'll extract the title, rating, link to more information about the book and the cover image of the book. Find the code on GitHub.
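As a rough illustration of pulling out those four fields, here is a sketch that runs without any extra installs. Note the swap: the walkthrough uses BeautifulSoup, while this example uses the standard library's `html.parser` instead. The sample markup is a simplified assumption about how each book is laid out on the page, and `BookParser` and `extract_books` are hypothetical names.

```python
from html.parser import HTMLParser

# Simplified, assumed markup for a single book entry.
SAMPLE_HTML = """
<article class="product_pod">
  <div class="image_container">
    <a href="catalogue/a-light-in-the-attic_1000/index.html">
      <img src="media/cover.jpg" alt="A Light in the Attic" class="thumbnail">
    </a>
  </div>
  <p class="star-rating Three"></p>
  <h3><a href="catalogue/a-light-in-the-attic_1000/index.html"
         title="A Light in the Attic">A Light in the ...</a></h3>
</article>
"""


class BookParser(HTMLParser):
    """Collect title, rating, link and cover for each <article class="product_pod">."""

    def __init__(self):
        super().__init__()
        self.books = []
        self._current = None  # dictionary for the book being parsed, if any

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        classes = (attrs.get("class") or "").split()
        if tag == "article" and "product_pod" in classes:
            self._current = {}
        elif self._current is None:
            return  # ignore everything outside a book entry
        elif tag == "img":
            self._current["cover"] = attrs.get("src")
        elif tag == "p" and "star-rating" in classes:
            # The rating is the class that sits next to "star-rating".
            self._current["rating"] = next(
                (c for c in classes if c != "star-rating"), None
            )
        elif tag == "a" and "title" in attrs:
            self._current["title"] = attrs["title"]
            self._current["link"] = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "article" and self._current is not None:
            self.books.append(self._current)
            self._current = None


def extract_books(html):
    parser = BookParser()
    parser.feed(html)
    parser.close()
    return parser.books


if __name__ == "__main__":
    print(extract_books(SAMPLE_HTML))
```

Each dictionary in the returned list plays the role of the single-book dictionary that gets appended to the result list before saving.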

For more on Scraper API's usage, check out my post on web scraping with Scrapy.

Web scraping is the process of extracting information from a webpage by taking advantage of patterns in the web page's underlying code. We can use web scraping to gather unstructured data from the internet, process it and store it in a structured format. In this walkthrough, we'll be storing our data in a JSON file.

Though web scraping is a useful tool for extracting data from a website, it's not the only means to achieve this task. Before starting to web scrape, find out if the page you seek to extract data from provides an API.

robots.txt file

Ensure that you check the robots.txt file of a website before making your scraper. This file tells you whether the website allows scraping or not. To check for the file, simply type the base URL followed by "/robots.txt". For more about robots.txt files, this post should provide better insight.

Another problem one might encounter while web scraping is the possibility of your IP address being blacklisted. I partnered with Scraper API, a startup specializing in strategies that'll ease the worry of your IP address being blocked while web scraping. They utilize IP rotation so you can avoid detection, boasting over 20 million IP addresses and unlimited bandwidth. In addition to this, they provide CAPTCHA handling for you, as well as enabling a headless browser so that you'll appear to be a real user and not get detected as a web scraper.
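The robots.txt check mentioned above can also be done in code. This is a minimal sketch using the standard library's `urllib.robotparser`; the helper names `robots_url` and `is_allowed` and the example rules are my own assumptions, not taken from any particular site.

```python
from urllib.parse import urljoin
from urllib.robotparser import RobotFileParser


def robots_url(base_url):
    """Return the robots.txt address: the base URL followed by /robots.txt."""
    return urljoin(base_url, "/robots.txt")


def is_allowed(robots_txt, page_url, user_agent="*"):
    """Check whether the given robots.txt text lets user_agent fetch page_url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, page_url)


if __name__ == "__main__":
    # Made-up rules for illustration only.
    rules = "User-agent: *\nDisallow: /private/\n"
    print(robots_url("https://example.com/catalogue/"))
    print(is_allowed(rules, "https://example.com/catalogue/page-1.html"))
    print(is_allowed(rules, "https://example.com/private/data.html"))
```

For a live site, `RobotFileParser` can also download the file itself through its `set_url` and `read` methods, so the rules do not have to be pasted in by hand.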